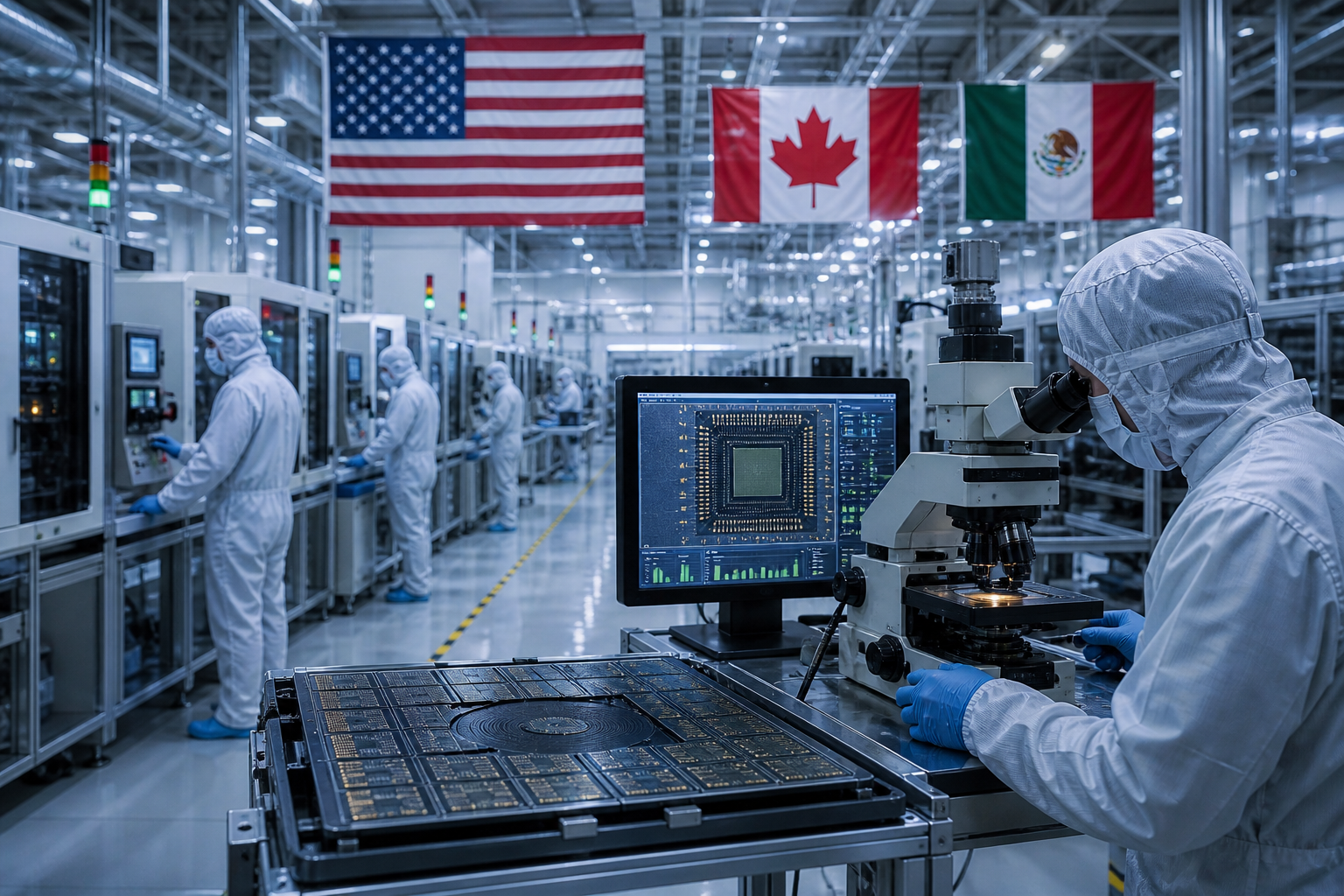

The United States is taking a proactive step in the realm of artificial intelligence (AI) safety by establishing an institute dedicated to evaluating the known and emerging risks associated with “frontier” AI models. Secretary of Commerce Gina Raimondo unveiled this initiative during her speech at the AI Safety Summit in Britain, emphasizing the need for collaboration between academia, industry, and the government to address these challenges effectively.

In her address, Raimondo highlighted the importance of collective effort, stating, “I will almost certainly be calling on many of you in the audience who are in academia and industry to be part of this consortium. We can’t do it alone; the private sector must step up.” This collaborative approach acknowledges that AI is complex and evolving, and that multiple stakeholders must work together to ensure its safe development and deployment.

Furthermore, Raimondo expressed her commitment to establishing a formal partnership between the new U.S. AI safety institute and the United Kingdom’s AI Safety Institute. Such international collaboration demonstrates the global recognition of the significance of AI safety and the need for coordinated efforts to address it effectively.

The newly launched effort will fall under the jurisdiction of the National Institute of Standards and Technology (NIST) and is set to lead the U.S. government’s endeavors related to AI safety, with a particular focus on evaluating advanced AI models. This institute’s mission is extensive and encompasses various aspects of AI safety, including the development of standards for safety, security, and testing of AI models, as well as the authentication of AI-generated content. Additionally, it will provide testing environments where researchers can assess emerging AI risks and address known impacts.

This step is in accordance with President Joe Biden’s recent executive order on artificial intelligence. The order, signed on Monday, requires developers of AI systems with potential implications for U.S. national security, the economy, public health, or safety to share the results of safety tests with the U.S. government before releasing those systems to the public. This requirement is grounded in the Defense Production Act, reaffirming the government’s dedication to safeguarding national security and the well-being of the public as AI technology advances.

The executive order also directs various government agencies to establish standards for AI testing. These standards will cover not only general safety but also chemical, biological, radiological, nuclear, and cybersecurity risks. The comprehensive nature of these directives demonstrates the government’s intent to regulate AI effectively while considering a broad spectrum of risks and challenges.

In the field of AI, where breakthroughs and innovations occur at a rapid pace, the need for robust safety measures is paramount. AI models, especially those at the frontier, have the potential to influence numerous aspects of society, including national security, the economy, and public welfare. Ensuring their safety is not only a technological challenge but also a matter of public policy and governance.

The U.S. government’s commitment to engaging academia, industry, and international partners in addressing AI safety is a significant step forward. Collaborative efforts are essential in addressing the multifaceted challenges posed by AI, and the involvement of a diverse set of stakeholders is a testament to the recognition of AI’s far-reaching implications.

As this new AI safety institute takes shape, it is expected to play a pivotal role not only in evaluating AI models but also in setting the standards and guidelines that will shape the responsible development and deployment of AI technologies in the United States and beyond. Establishing these safeguards is essential to harnessing the potential of AI while mitigating its risks, ultimately contributing to a safer and more secure AI-driven future.